Elastic AI: Web Crawler

Iván Frías Molina

1/29/2024

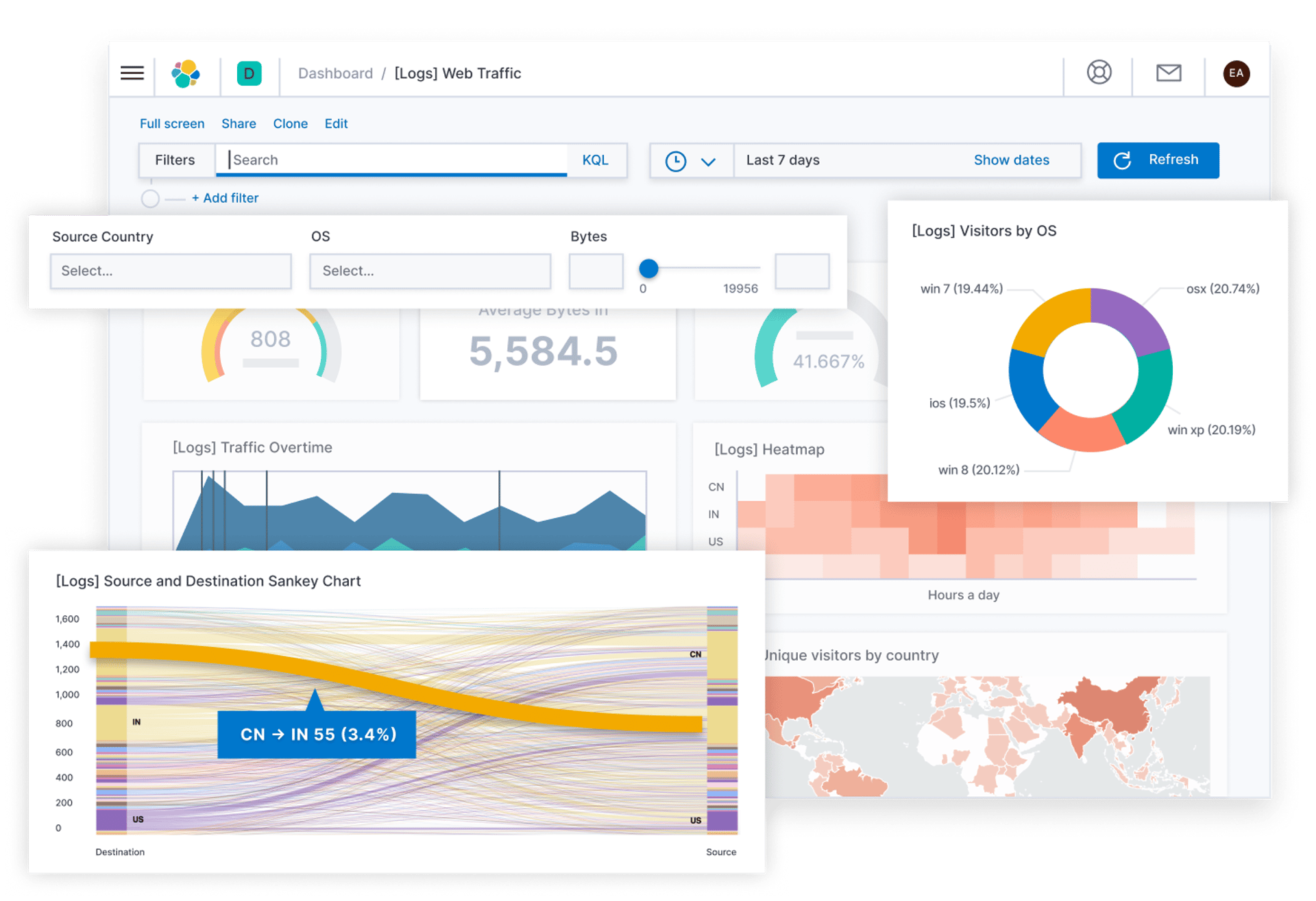

The Elastic web crawler is designed to crawl websites and index their content in the Elastic platform.

It automates data extraction, which makes it easy to collect and analyze large amounts of information. Combined with AI models, it enables accurate indexing and a better understanding of the content.

With the web crawler we can quickly integrate content for later search and analysis. The indexed data is transformed and processed with custom models through ingest pipelines and inference processors. Here we can use ELSER (Elastic Learned Sparse EncodeR) or any other trained model deployed in the cluster.
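
As a minimal sketch of the inference step, the Python snippet below builds the JSON body of an ingest pipeline with a single `inference` processor that sends the crawled page text to ELSER. It assumes a recent 8.x release that supports the processor's `input_output` option, an ELSER model deployed under the ID `.elser_model_2`, and that the crawler stores page text in `body_content`. The output field `body_content_embedding` and the pipeline name `search-my-site@custom` are hypothetical, and the output field would need to be mapped as `sparse_vector` beforehand. The snippet only prints the request, which could then be sent from Kibana Dev Tools or any Elasticsearch client.

```python
import json


def build_elser_pipeline(input_field: str, output_field: str,
                         model_id: str = ".elser_model_2") -> dict:
    """Return an ingest pipeline body with a single ELSER inference processor.

    Assumes an Elasticsearch 8.x release that supports `input_output` and an
    ELSER model already deployed under `model_id`.
    """
    return {
        "description": "Expand crawled page text with ELSER (illustrative)",
        "processors": [
            {
                "inference": {
                    "model_id": model_id,
                    # Read text from input_field, write ELSER tokens to output_field.
                    "input_output": [
                        {"input_field": input_field, "output_field": output_field}
                    ],
                }
            }
        ],
    }


if __name__ == "__main__":
    # Assumed/hypothetical names: "body_content" holds the crawled page text,
    # "body_content_embedding" must already be mapped as sparse_vector.
    body = build_elser_pipeline("body_content", "body_content_embedding")
    print("PUT _ingest/pipeline/search-my-site@custom")  # hypothetical pipeline name
    print(json.dumps(body, indent=2))
```

Keeping the model ID as a parameter reflects the point above that ELSER is only one option: another deployed model could be swapped in without changing the rest of the pipeline.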

To configure the web crawler, make sure your deployment has an Enterprise Search node with enough RAM (at least 4 GB). The crawler is then set up from the Enterprise Search > Content > Web crawler tab.

There is also a simple interface for consulting the indexed data. This crawler was introduced in version 8.4.0. It is available in all Elastic Cloud deployments, while self-managed deployments require a subscription.
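
Besides the built-in interface, the indexed pages can also be queried through the regular search API. The sketch below builds two request bodies: a keyword `multi_match` query over the crawler's `title` and `body_content` fields, and a `text_expansion` query (the query type used with ELSER in Elasticsearch 8.x) against the hypothetical `body_content_embedding` field that an ELSER pipeline would populate. The field names, the title boost and the index name `search-my-site` are assumptions for illustration.

```python
import json


def keyword_query(text: str, size: int = 10) -> dict:
    """Classic full-text search over the text fields the crawler stores (assumed names)."""
    return {
        "size": size,
        "query": {
            "multi_match": {
                "query": text,
                # Weight titles above body text; both field names are assumptions.
                "fields": ["title^2", "body_content"],
            }
        },
    }


def elser_query(text: str, field: str = "body_content_embedding",
                model_id: str = ".elser_model_2", size: int = 10) -> dict:
    """Semantic search against a field populated by an ELSER inference pipeline."""
    return {
        "size": size,
        "query": {
            "text_expansion": {
                field: {"model_id": model_id, "model_text": text}
            }
        },
    }


if __name__ == "__main__":
    index = "search-my-site"  # hypothetical crawler index name
    for body in (keyword_query("web crawler"), elser_query("web crawler")):
        print(f"GET {index}/_search")
        print(json.dumps(body, indent=2))
```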

Note that the crawler can also extract content from PDF and DOCX files. Crawls can be scheduled at regular intervals or at specific times: for example, every 7 days, or every day at 00:00. A crawl can also be started manually, and the format of the indexed data can be modified by editing the ingest pipeline.
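
The two scheduling styles described above, a fixed interval and a fixed time of day, can be illustrated with a small Python sketch that computes the next run for each. This is not the crawler's own scheduler or configuration API; the function names are hypothetical and only show how an every-7-days interval differs from a daily 00:00 schedule.

```python
from datetime import datetime, time, timedelta


def next_interval_run(last_run: datetime, every_days: int = 7) -> datetime:
    """Interval schedule: run again a fixed number of days after the last crawl."""
    return last_run + timedelta(days=every_days)


def next_daily_run(now: datetime, at: time = time(0, 0)) -> datetime:
    """Time-of-day schedule: the next occurrence of `at` strictly after `now`."""
    candidate = datetime.combine(now.date(), at)
    if candidate <= now:
        candidate += timedelta(days=1)
    return candidate


if __name__ == "__main__":
    last = datetime(2024, 1, 29, 15, 30)
    print(next_interval_run(last))  # 2024-02-05 15:30:00
    print(next_daily_run(last))     # 2024-01-30 00:00:00
```

In this sketch the interval schedule follows the start time of the previous crawl, so a manual run shifts every later run, while the time-of-day schedule always lands on the same clock time.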

Byviz Analytics S.L.