Elastic AI: Inferences

Iván Frías Molina

1/28/2024


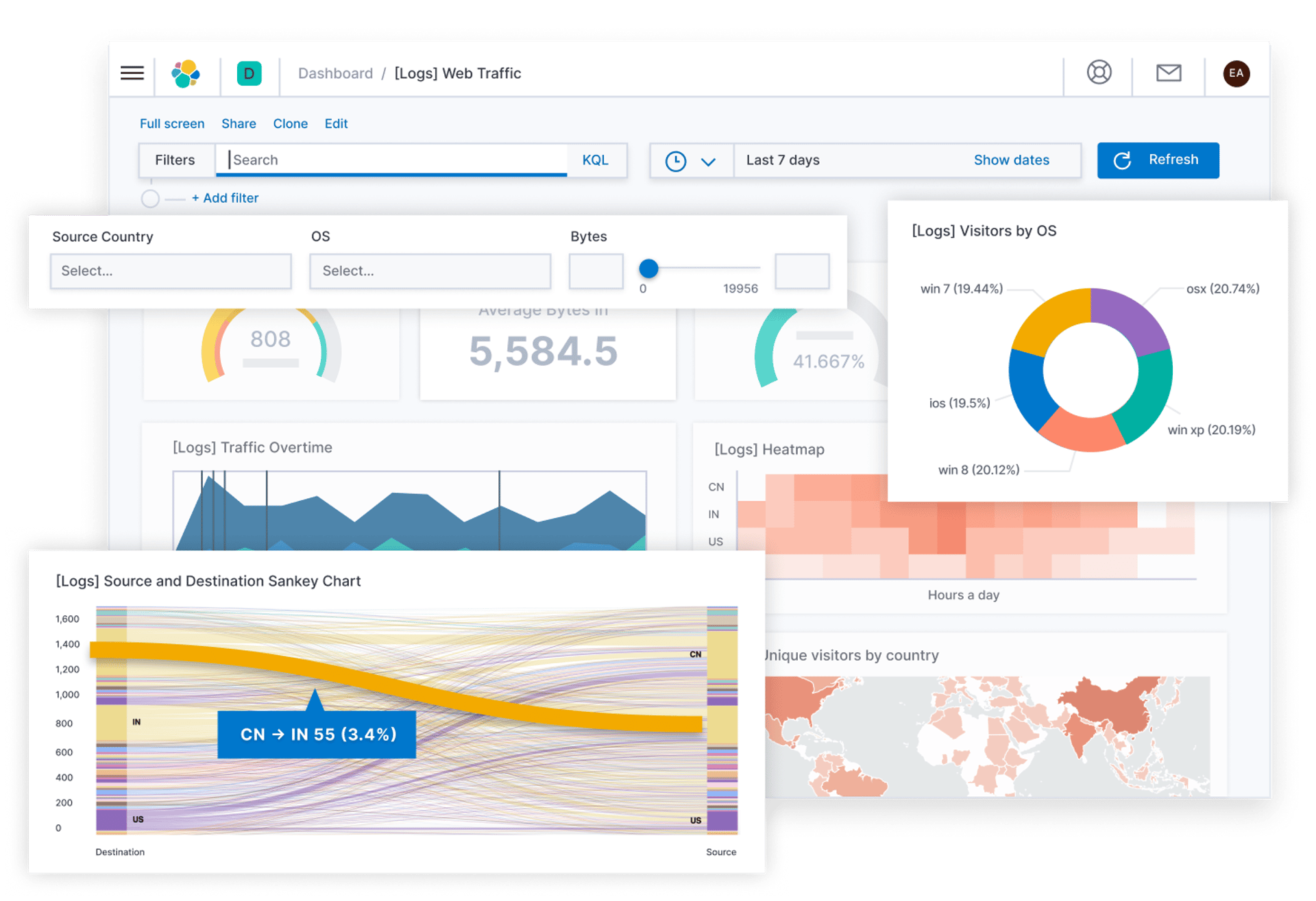

Inference is the process of applying a trained AI model to new data to obtain predictions or conclusions.

Inferences are used to improve search, analysis, and interpretation of stored data.

The first step is to train a model on a data set. The model can also be fine-tuned so that it performs specific tasks, such as classification, prediction, or pattern recognition.

The model must then be integrated into Elastic. This may involve converting it into a compatible format, so it is advisable to consult the documentation for the latest version to check compatibility.

When new data is ingested, the model is applied to it. This requires creating an inference pipeline.

Inference pipelines are created in Elasticsearch using Ingest, so the cluster needs at least one node with the ingest role.

If we are going to make intensive use of Ingest, it is advisable to dedicate a specific node to this processing.
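To check which nodes can run ingest pipelines, one option is to inspect the `roles` array that `GET _nodes` returns for each node. The sketch below works on an already-parsed response; the sample data and node names are hypothetical, and the helper `ingest_node_names` is illustrative rather than part of any Elastic client. A dedicated ingest node would typically be configured with `node.roles: [ ingest ]` in `elasticsearch.yml`.

```python
from __future__ import annotations


def ingest_node_names(nodes_info: dict) -> list[str]:
    """Return the sorted names of nodes whose roles include "ingest"."""
    return sorted(
        node["name"]
        for node in nodes_info.get("nodes", {}).values()
        if "ingest" in node.get("roles", [])
    )


# Hypothetical, heavily trimmed response from GET _nodes.
sample_nodes_info = {
    "nodes": {
        "a1": {"name": "node-data-1", "roles": ["data", "master"]},
        "b2": {"name": "node-ingest-1", "roles": ["ingest"]},
        "c3": {"name": "node-mixed-1", "roles": ["data", "ingest"]},
    }
}

print(ingest_node_names(sample_nodes_info))
```

If the list comes back empty, no node in the cluster can execute the inference pipeline.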

Inference lets us work with NLP models, ELSER, text embeddings, and more.

This is an example of a basic inference. Multiple inferences can also be used.

This pipeline can be used with ELSER (explained in the other posts in this series).

    {
      "inference": {
        "model_id": "modelo_id",
        "input_output": [
          {
            "input_field": "field_input",
            "output_field": "field_output"
          }
        ]
      }
    }
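The processor above is only one entry: in a full pipeline definition it sits inside a `processors` array, and the definition is sent to Elasticsearch with `PUT _ingest/pipeline/<pipeline-id>`. As a minimal sketch, the following Python builds such a body with two inference processors to illustrate using multiple inferences. The second model ID, the `title` fields, and the helper names are assumptions made for illustration, and the code only builds and prints the JSON; it does not contact a cluster.

```python
import json


def inference_processor(model_id, field_pairs):
    """Build one inference processor from (input_field, output_field) pairs."""
    return {
        "inference": {
            "model_id": model_id,
            "input_output": [
                {"input_field": src, "output_field": dst}
                for src, dst in field_pairs
            ],
        }
    }


def build_pipeline(description, processors):
    """Wrap processors in the body expected by PUT _ingest/pipeline/<id>."""
    return {"description": description, "processors": list(processors)}


pipeline = build_pipeline(
    "Basic inference pipeline (illustrative)",
    [
        # Same values as the example processor in the text.
        inference_processor("modelo_id", [("field_input", "field_output")]),
        # Hypothetical second model and fields, showing multiple inferences.
        inference_processor("second_model_id", [("title", "title_output")]),
    ],
)

print(json.dumps(pipeline, indent=2))
```

Once the pipeline is stored, documents indexed with the `pipeline=<pipeline-id>` query parameter pass through each processor in order.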


Byviz Analytics S.L.
